Byline: Howie Xu

Cloud-edge-based proxy security services like the Zscaler Zero Trust Exchange rely on Machine Learning models to detect, identify, and block malicious traffic. Zscaler (my employer) processes more than 160 billion data transactions per day, the vast majority of which are quickly recognized as benign. But it’s the minority of remaining traffic (still a huge volume) that demands further analysis: How do we ensure nothing bad gets through?

Detection starts with domain analysis

Our Machine Learning-based traffic analysis begins with domain reputation assessment. (I wrote about that first-stage, lightweight model here.) Traffic emanates from domains. Known, good domains are easily recognized. (For instance, BBC.com would be categorized as “News & Media.”) However, data invariably arrives from new or unknown domains (“Misc/Unknown” category), and some of those can be malicious.

Here at Zscaler, we use unsupervised learning on those Misc/Unknown category URLs. (More on that in my earlier article.) We calculate a domain-reputation score based on components like lexical analysis, referral and sequence, popularity, and ASN/WHOIS reputation. From there, Machine Learning algorithms adjust score weights to ensure final reputation scores follow a Gaussian distribution. This approach “clears” much of the data traffic for safe passage, but identifies a smaller number of “suspicious” domains requiring further attention and deeper analysis.
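To make the scoring idea concrete, here is a minimal sketch of a weighted reputation score followed by a simple outlier check. It is an illustration only, not Zscaler's implementation: the component names follow the list above, but the weights, the z-score threshold, the convention that a higher score means more suspicious, and the function names `reputation_score` and `flag_suspicious` are all assumptions.

```python
from statistics import mean, stdev

# Hypothetical weights; the article does not disclose the real values.
WEIGHTS = {"lexical": 0.4, "referral": 0.2, "popularity": 0.2, "asn_whois": 0.2}


def reputation_score(components):
    """Weighted sum of per-component scores, each assumed to lie in [0, 1]."""
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS)


def flag_suspicious(scores, z_threshold=1.5):
    """Return domains scoring more than z_threshold standard deviations
    above the mean (assumes a higher score means more suspicious).
    Needs at least two domains, because stdev() is undefined for one."""
    mu = mean(scores.values())
    sigma = stdev(scores.values())
    if sigma == 0:
        return []  # all scores identical: nothing stands out
    return [d for d, s in scores.items() if (s - mu) / sigma > z_threshold]
```

If the final scores are roughly Gaussian, a z-score cutoff like this is one straightforward way to separate the bulk of “cleared” domains from the small tail that deserves deeper analysis.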

In this article, we’ll do a deep dive on one critical analysis we perform on those unknown data sources: deconstructing communication methods to detect previously unknown botnets.

All about bots, botnets, and command-and-control communications

A botnet is a series of machines or devices, all connected together, with each running one or more automated scripts (or “bots”). Often, this network of bots is composed of compromised machines, effectively hijacked to work in tandem to launch cyber attacks via remote control. Botnets can be used to perform Distributed Denial-of-Service (DDoS) attacks, exfiltrate data, or send spam. The attacker controls the hijacked botnet device and its connection using command-and-control (“C2”) software.

Threat actors mask botnet activity to evade detection. Data traffic from recognized botnets can be blocked. Unknown botnets, as the name suggests, are those for which security experts do not yet have a recognizable “signature” for detection. As more botnet-launched attacks succeed, more new botnets pop up, and it becomes harder for security experts to keep up.

Machine Learning offers great promise for detecting unknown botnets. Machine Learning technology can be applied to identify unknown botnets based on their network communication, specifically by detecting the C2 channel for the new botnet.

Command-and-Control channel detection is a supervised learning exercise with multiple steps, including data collection and labeling, feature engineering and modeling, and human-in-the-loop and lab testing.

Data collection and labeling

A supervised learning framework needs data and associated labels. For Zscaler, the data consists of the 160 billion daily transactions generated by its diverse customer base. As for labels, Zscaler maintains a large database of botnet and non-botnet domains/URLs, and uses its domain “verdicts” as labels for Machine Learning model training. The domain/URL verdicts derive from various sources, including third-party threat intelligence feeds, Zscaler sandbox infrastructure, and human reviews.
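The labeling step can be pictured as a join between transactions and a verdict store. The sketch below assumes a hypothetical in-memory dictionary of verdicts and a hypothetical transaction record with a `domain` field; the real database, its schema, and its label values are not described in this article.

```python
def label_transactions(transactions, verdicts):
    """Attach a label to every transaction whose domain has a verdict.

    transactions: list of dicts with at least a "domain" key (assumed shape).
    verdicts: dict mapping domain -> 1 (botnet) or 0 (non-botnet) (assumed).
    Transactions without a verdict are skipped, since they cannot be used
    for supervised training.
    """
    labeled = []
    for tx in transactions:
        label = verdicts.get(tx["domain"])
        if label is not None:
            labeled.append({**tx, "label": label})
    return labeled


# Hypothetical example data.
verdicts = {"known-c2.example": 1, "bbc.com": 0}
transactions = [
    {"domain": "bbc.com", "url": "/news"},
    {"domain": "known-c2.example", "url": "/gate.php"},
    {"domain": "never-seen.example", "url": "/"},
]
training_rows = label_transactions(transactions, verdicts)
```

Only the two transactions with a known verdict end up in `training_rows`; the unknown domain is left for the model to score later.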

Feature engineering and modeling

Detecting a botnet’s C2 traffic is challenging: It’s typically low in volume, and adversaries disguise it to look like normal traffic (e.g., by sending it to a seemingly reputable destination). Machine Learning models work most effectively when they are based on established heuristics or rules, because this lets data scientists better leverage their own intuition.

It’s not quite that straightforward when we’re dealing with Command-and-Control traffic analysis. But looking at multiple aspects of network transactions with some rich context can deliver effective detection. Here at Zscaler, we examine network transaction domain details, including hostname, associated IP address, full URL string, user-agent string, and many more.

We extract features from spatial-temporal correlations among the hosts, users, and companies involved in network transactions. (In Machine Learning parlance, a “feature” is useful, informative data extracted from the original network transactions.) In other words, feature engineering correlates network events across time (temporal) and across hosts, users, and companies (spatial).

For example, a botnet-infected host might trigger several different DNS requests to ping for a C2 server before establishing communication with it. In some cases, that behavior might appear normal if compared to a baseline associated with that particular host, but unusual if compared to the baseline established from a larger population of hosts.
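As a rough illustration of that kind of spatial-temporal feature, the sketch below counts the distinct domains each host looked up inside a time window (temporal) and compares one host's count with the median across all hosts (spatial). The event tuple layout, the function names, and the choice of the median as the population baseline are assumptions made for this example.

```python
from collections import defaultdict
from statistics import median


def distinct_domains_per_host(events, window_start, window_end):
    """Count distinct domains per host within [window_start, window_end).

    events: iterable of (timestamp, host, domain) tuples (assumed shape).
    """
    seen = defaultdict(set)
    for ts, host, domain in events:
        if window_start <= ts < window_end:
            seen[host].add(domain)
    return {host: len(domains) for host, domains in seen.items()}


def population_ratio(counts, host):
    """Ratio of one host's count to the median count across all hosts."""
    base = median(counts.values())
    return counts[host] / base if base else float("inf")


# Hypothetical DNS events: host-a probes many domains, the others do not.
events = [(t, "host-a", f"d{t}.example") for t in range(6)]
events += [(0, "host-b", "x.example"), (1, "host-b", "y.example")]
events += [(0, "host-c", "x.example"), (2, "host-c", "z.example")]
events += [(99, "host-b", "late.example")]  # outside the window, ignored

counts = distinct_domains_per_host(events, window_start=0, window_end=10)
ratio = population_ratio(counts, "host-a")
```

A ratio well above 1 says the host is unusual relative to its peers, even if the same count would look ordinary against that host's own history.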

After the features are obtained, we then train a tree-based machine learning model for each aspect (e.g., hostname-based transaction patterns, IP-based transaction patterns, URL, user-agent, etc.) and combine them together to produce a final prediction. (See Figure 1.)

Why not stack all the features together into a single predictive model? First, empirical evidence suggests that the “ensemble-type” architecture achieves higher accuracy. Second, the ensemble approach helps with the prediction’s “explainability”: When the model makes a positive prediction regarding a particular transaction related to a particular domain, we can assess the individual score output by each submodel and understand the logic behind the prediction.
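The explainability benefit is easy to see in a small sketch. The article does not say how the submodel outputs are combined, so the weighted average below is an assumption, as are the function names and the example scores (which are made up and are not the values from Table 1).

```python
def combine(scores, weights=None):
    """Weighted average of submodel scores, each assumed to lie in [0, 1].

    With no weights given, every submodel counts equally.
    """
    if weights is None:
        weights = {name: 1.0 for name in scores}
    total = sum(weights[name] for name in scores)
    return sum(weights[name] * scores[name] for name in scores) / total


def explain(scores):
    """Rank submodels from most to least suspicious for a given prediction."""
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical per-aspect scores for one domain.
submodel_scores = {"hostname": 0.2, "ip": 0.4, "url": 0.9, "user_agent": 0.5}
final_score = combine(submodel_scores)
ranking = explain(submodel_scores)  # the URL submodel comes first here
```

Because each aspect keeps its own score, a reviewer can see at a glance which submodel drove the prediction, which would be much harder with one monolithic model over all features.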

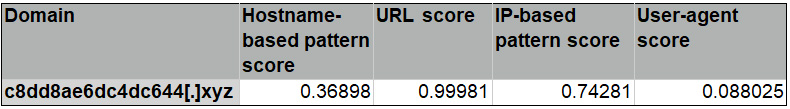

Table 1 below shows the example of a positive prediction by the Zscaler model and its corresponding scores output by each of the submodels. The scores are in the range from 0 to 1, where the higher value indicates the more suspicious. In this particular case, the model called out the domain c8dd8ae6dc4dc644[.]xyz because the URL looks very suspicious — the URL score is very high — which somewhat matches with the human impression.

Table 1: A positive predicted domain with associated submodel scores

Figure 1. Machine learning model architecture for C2 activity detection

Human-in-the-loop and lab testing

Machine Learning is good at spotting suspicious C2 activity based on transaction data. Yet human review can still be necessary to identify and confidently call out malicious C2 activity. At issue: It’s not feasible for human experts to review billions (or even, if the data is filtered, millions) of transactions per day. Instead, Zscaler employs a two-phase approach: The Machine Learning model outputs a shortlist of “high confidence” suspicious C2 domains based on transactions from within a specific time window, effectively filtering the transactions down to a manageable size for phase-two human review (and subsequent action). In the example above, “c8dd8ae6dc4dc644[.]xyz” was confirmed as a malicious C2 domain by security researchers.
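The first phase of that two-phase approach can be sketched as a simple filter. Everything specific here is an assumption for illustration: the `(timestamp, domain, score)` tuple layout, the 0.9 confidence threshold, the cap on list size, and the function name `shortlist`.

```python
def shortlist(predictions, window_start, window_end, threshold=0.9, max_items=50):
    """Build a review list of high-confidence suspicious domains.

    predictions: iterable of (timestamp, domain, score) tuples (assumed shape).
    Keeps the highest score seen per domain inside [window_start, window_end),
    drops anything below the threshold, and returns at most max_items
    (domain, score) pairs ordered from most to least suspicious.
    """
    best = {}
    for ts, domain, score in predictions:
        if window_start <= ts < window_end and score >= threshold:
            best[domain] = max(score, best.get(domain, 0.0))
    ranked = sorted(best.items(), key=lambda kv: kv[1], reverse=True)
    return ranked[:max_items]


# Hypothetical model outputs for one time window.
predictions = [
    (1, "a.example", 0.95),
    (2, "a.example", 0.99),   # same domain, higher score wins
    (3, "b.example", 0.50),   # below threshold, dropped
    (4, "c.example", 0.92),
    (50, "d.example", 0.99),  # outside the window, dropped
]
review_queue = shortlist(predictions, window_start=0, window_end=10)
```

Deduplicating by domain and capping the list are what turn millions of scored transactions into a queue that human researchers can realistically work through.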

Detecting the unknown: a process of continuous improvement

Machine Learning can detect and block unknown botnets via analysis of command-and-control channels. Zscaler leverages unsupervised learning techniques to shortlist suspicious domains for deeper analysis, and then uses supervised learning methods to detect botnet command-and-control channels with high confidence. Every day, the Zscaler Zero Trust Exchange blocks botnets that have never been seen before.

About the Author

Vice President of Machine Learning and AI

Howie Xu is Vice President of Machine Learning and AI at Zscaler. Previously, he founded and headed VMware’s networking team for a decade, ran the entire engineering team for Cisco’s Cloud Networking & Services, and was the CEO and co-founder of TrustPath, which was acquired by Zscaler in 2018.